Peer moderated group marks

Peer assessment forms part of the assessment strategy within many courses at Edinburgh Napier University. One such course is Project Evaluation, led by lecturer Robert Mason in the School of Engineering and the Built Environment. The module focuses on the generic skills of a built environment professional, and each student carries out a role they would have within industry. The peer assessment is worth 25% of a 40-credit module, so it is an important skill for students to have.

A well-known criticism of assessed group work is that each student receives the same team mark, regardless of individual performance. In his discussions with students about peer assessment and possible mechanisms for assessing contribution in the workplace, Robert highlighted the fact that some people will naturally lead while others ‘lurk’ within a group, contributing nothing. Academic staff cannot judge how much each individual has contributed to the final group outcome, as they do not supervise the group work element.

When Robert discussed this challenge with Learning Technology Support Manager Stephen Bruce, it was proposed that WebPA would allow students to enter the data on group members’ contributions and academic staff to receive the assessment outcome that WebPA generates. WebPA is an online automated tool that facilitates peer-moderated marking of group work.

Prior to using WebPA, Robert created multiple spreadsheets to capture data and then combined the results in a calculation to assess group participation. With large student cohorts, that amounts to a lot of data entry and a significant time commitment. Calculating the marks and returning them to the gradebook involved several spreadsheets and complex calculations.

Once the WebPA assessment is set up, the data is entered by the students and the overall outcome is generated by WebPA.
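To make the idea of a peer-moderated mark more concrete, the following is a minimal sketch of one common way such a calculation can work. It is an illustration under stated assumptions, not a description of Robert's spreadsheets or of WebPA's exact implementation: the function name `peer_moderated_marks`, the `pa_weight` parameter, the scaling for members who did not submit, and the sample scores are all hypothetical. Each assessor's scores are turned into fractions of the total they awarded, the fractions each student receives are summed into a weighting factor, and that factor moderates the group mark.

```python
def peer_moderated_marks(group_mark, ratings, pa_weight=1.0):
    """Illustrative peer moderation of a group mark (hypothetical helper).

    ratings[assessor][assessee] is the total score the assessor awarded
    to the assessee across all criteria (self-ratings included).
    pa_weight is the share of the group mark that is peer moderated
    (an assumed parameter, between 0 and 1).
    """
    # Collect everyone who rated or was rated.
    members = set(ratings)
    for awarded in ratings.values():
        members.update(awarded)

    factors = dict.fromkeys(members, 0.0)
    submitters = 0
    for awarded in ratings.values():
        total = sum(awarded.values())
        if total <= 0:
            continue  # ignore empty submissions
        submitters += 1
        # Normalise so that each assessor hands out exactly 1.0 in total.
        for assessee, score in awarded.items():
            factors[assessee] += score / total

    if submitters == 0:
        # Nobody submitted: everyone keeps the unmoderated group mark.
        return {m: round(float(group_mark), 1) for m in members}

    # Assumption: scale up when some members did not submit, so that an
    # average contributor still ends up with a factor of 1.0.
    scale = len(members) / submitters

    marks = {}
    for member in sorted(members):
        weighting = (1 - pa_weight) + pa_weight * factors[member] * scale
        marks[member] = round(min(100.0, group_mark * weighting), 1)
    return marks


# Made-up example: a group of three with a group mark of 60,
# where half of the mark is peer moderated.
ratings = {
    "A": {"A": 4, "B": 4, "C": 2},
    "B": {"A": 4, "B": 4, "C": 2},
    "C": {"A": 3, "B": 3, "C": 3},
}
marks = peer_moderated_marks(60, ratings, pa_weight=0.5)
print(marks)
```

In this made-up example, A and B are rated above C by two of the three assessors, so their individual marks rise to about 64 while C's falls to about 52. With `pa_weight=0`, every member would simply receive the group mark of 60.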

What do I have to do to set up WebPA?

A good place to start is having a chat with your Learning Technology Advisor.

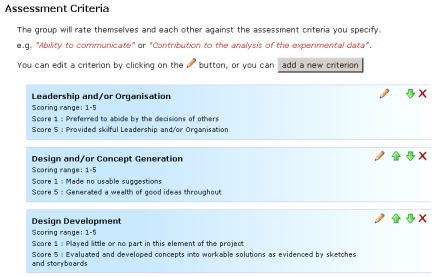

Criteria have to be created for students to use in assessing each other’s contribution. Robert drew up the criteria by talking to students about the various standards and incorporating the students’ own feedback on possible criteria into the WebPA criteria. Students had to complete two group presentations, the marks for which were fed into the WebPA calculation.

The criteria were also informed by work done by John Cowan of Heriot-Watt University on the ‘sound standard’, that being the level of academic expectation within a group context. Some years ago, Cowan talked to students about what they would expect of each other’s contribution, which brought about criteria such as attendance, quality of work and engagement. Using these criteria, at the end of the project students assess their own contribution and that of others in the group.

Working with the Learning Technology Advisor, Robert set up the WebPA assessment in Moodle, applied the criteria within WebPA and enabled the start and end dates.

Recommendations

“Benefits were without doubt the time saving overall”.

Robert would definitely use this tool again where peer assessment is part of the assessment strategy for a module, particularly where summative assessment is involved. Where formative assessment is concerned, Robert would want to encourage students to discuss the process as part of the learning experience.

Perhaps the greatest challenge was getting the students to understand the mechanism by which their assessment of their peers’ contribution affected the overall group outcome. Robert feels this area would need greater attention when using WebPA with another cohort.