Music recommender systems are used by music streaming platforms to provide listeners with personalised recommendations for new artists and songs. These algorithmic systems use dynamic data modelling and add to existing recommendation sources such as word of mouth, journalism, TV and radio, and live events. This research investigated the extent to which user responses to recommendations may vary depending on their source.

The first stage of our study collected data about participants’ preferences between recommendations from algorithmic, editorial and peer sources. The second stage asked participants for their opinions on three sets of tracks, each of which appeared to have been recommended by one of these sources. In fact, the tracks in all three sets were generated using the same process. To maintain the perception that some tracks had been recommended by their peers, participants were recruited in pairs of friends.

We recruited 28 participants, each of whom completed a sign-up survey providing 10 song recommendations for their partner. Using the songs suggested by participants, we created three playlists of five songs for each participant, labelled ‘algorithm’, ‘editorial’ and ‘peer’. To do this, we created a new Spotify account and, for each participant, a new playlist of 15 songs. Each participant’s playlist was ‘seeded’ with the ten songs they had suggested in the sign-up survey and five songs that their partner had suggested for them. We then took the next fifteen songs that Spotify recommended for this specific playlist and ‘labelled’ each in turn as ‘algorithm’, ‘editorial’ or ‘peer’, so that each participant received 15 new songs split across the three labelled playlists.
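The labelling step can be sketched as a simple rotation over the recommended tracks. This is a minimal sketch that assumes ‘in turn’ means the labels cycle through ‘algorithm’, ‘editorial’ and ‘peer’ in that order; the post does not specify the order, and the function name and track IDs are hypothetical placeholders rather than part of the study’s tooling.

```python
from itertools import cycle

# Assumed label order; the post only says each track was labelled "in turn".
LABELS = ("algorithm", "editorial", "peer")


def label_recommendations(recommended_tracks, labels=LABELS):
    """Assign labels to tracks in rotation and group them into playlists.

    `recommended_tracks` stands in for the next fifteen tracks Spotify
    recommended for a participant's seeded playlist (hypothetical input).
    Returns a dict mapping each label to its list of tracks.
    """
    playlists = {label: [] for label in labels}
    # zip stops at the end of the track list; cycle repeats the labels.
    for track, label in zip(recommended_tracks, cycle(labels)):
        playlists[label].append(track)
    return playlists


if __name__ == "__main__":
    # Placeholder track IDs, not real study data.
    tracks = [f"track_{i:02d}" for i in range(1, 16)]
    for label, items in label_recommendations(tracks).items():
        print(label, items)
```

With fifteen tracks, the rotation gives each label five tracks, matching the three five-song playlists described above, and every label draws from the same pool of Spotify recommendations.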

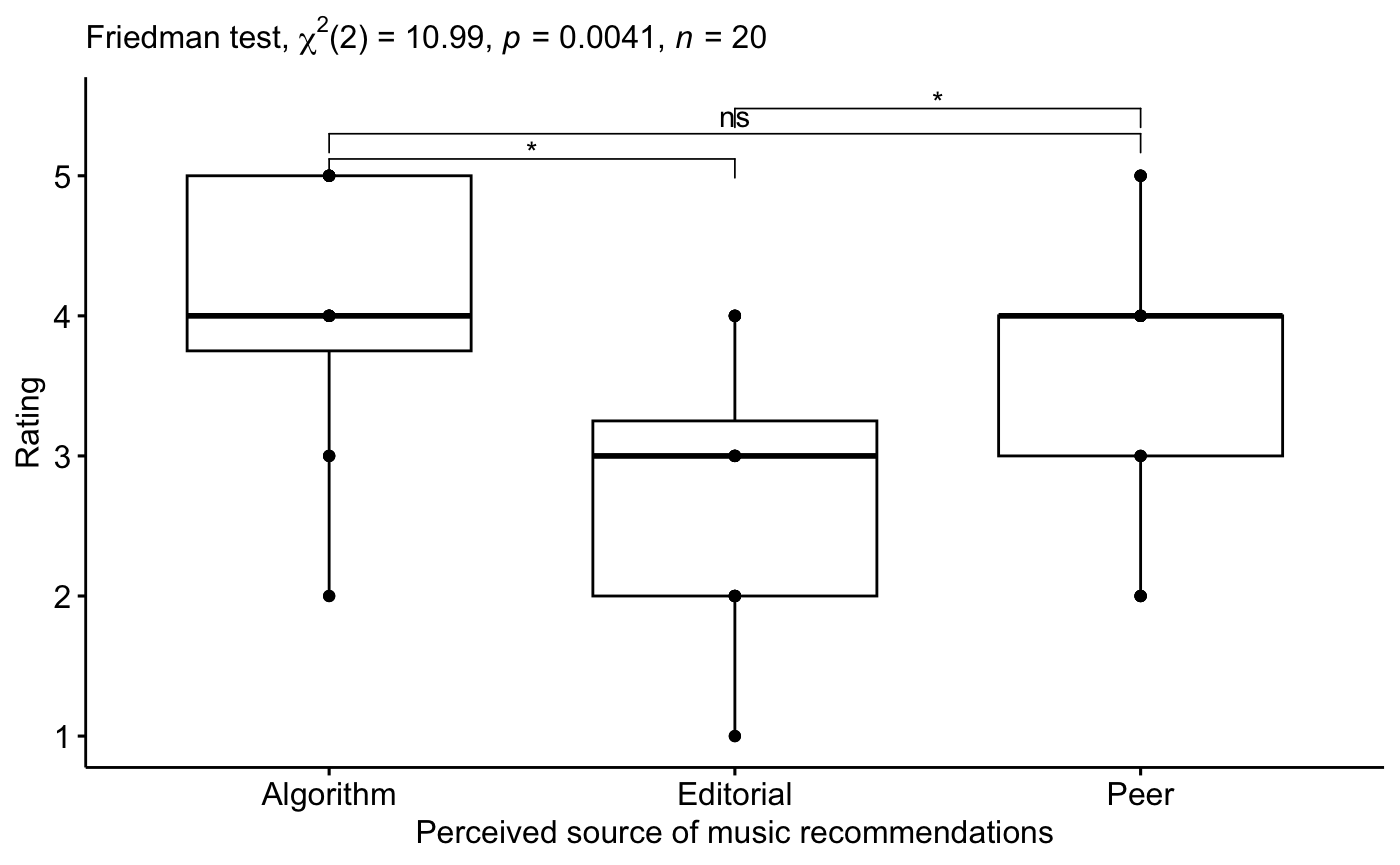

We compared participants’ ratings of each source of music recommendations (‘algorithm’, ‘editorial’ and ‘peer’). Our results suggest that there is an overall significant difference in participant ratings across the perceived sources of music recommendations, although the effect size is small.
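The post does not say which test produced this overall comparison. For three repeated ratings from the same participants, one common choice is a Friedman test, with Kendall’s W as its effect size. The sketch below assumes that design; the function names and example ratings are hypothetical, and the closed-form p-value holds only for exactly three sources (two degrees of freedom). In practice, `scipy.stats.friedmanchisquare` computes the same tie-corrected statistic.

```python
import math
from collections import Counter


def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of the positions they occupy."""
    first, last = {}, {}
    for pos, v in enumerate(sorted(values), start=1):
        first.setdefault(v, pos)
        last[v] = pos
    return [(first[v] + last[v]) / 2 for v in values]


def friedman_with_kendalls_w(ratings):
    """Tie-corrected Friedman test plus Kendall's W as an effect size.

    `ratings` is a list of rows, one per participant, with one column per
    source (e.g. algorithm, editorial, peer). Returns (chi2, p_value, w).
    """
    n = len(ratings)
    k = len(ratings[0])
    if k != 3:
        raise ValueError("the closed-form p-value below assumes exactly three sources")
    rank_sums = [0.0] * k
    tie_sum = 0
    for row in ratings:
        # Rank each participant's ratings against each other.
        for j, rank in enumerate(average_ranks(row)):
            rank_sums[j] += rank
        # Accumulate t^3 - t for every group of tied ratings in this row.
        tie_sum += sum(t ** 3 - t for t in Counter(row).values())
    chi2 = 12.0 / (n * k * (k + 1)) * sum(r * r for r in rank_sums) - 3.0 * n * (k + 1)
    correction = 1.0 - tie_sum / (n * k * (k * k - 1))
    if correction == 0:
        raise ValueError("every participant gave tied ratings; the test is undefined")
    chi2 /= correction
    # Chi-square survival function for 2 degrees of freedom is exp(-x / 2).
    p_value = math.exp(-chi2 / 2.0)
    kendalls_w = chi2 / (n * (k - 1))
    return chi2, p_value, kendalls_w


if __name__ == "__main__":
    # Hypothetical 1-5 ratings for (algorithm, editorial, peer); not study data.
    example = [[4, 2, 4], [5, 3, 4], [3, 3, 4], [4, 2, 5], [4, 3, 3]]
    chi2, p, w = friedman_with_kendalls_w(example)
    print(f"chi2={chi2:.2f}, p={p:.3f}, W={w:.2f}")
```

Kendall’s W runs from 0 (no consistent ordering of sources across participants) to 1 (every participant ranks the sources identically), which is why a test can be significant while the effect size remains small.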

To discover whether there were differences in ratings for specific perceived sources of music recommendations, we then conducted a post-hoc analysis. The results suggest that there is a significant difference, with a large effect size, between participants’ ratings for ‘algorithm’ and ‘editorial’, and between their ratings for ‘editorial’ and ‘peer’. There was no significant difference between their ratings for ‘algorithm’ and ‘peer’. These results suggest that participants are significantly more open to algorithmic recommendations than to editorial recommendations, and significantly more open to peer recommendations than to editorial recommendations.
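The post does not name the post-hoc test. One common choice for paired comparisons like these is a Wilcoxon signed-rank test between each pair of sources, with r = |z| / √N as the effect size and a Bonferroni adjustment for the three comparisons. The sketch below assumes that approach (normal approximation, zero differences dropped, N taken as the number of participant pairs); all function names and example values are hypothetical. For small samples, `scipy.stats.wilcoxon` can compute exact p-values, which may be preferable.

```python
import math
from collections import Counter
from itertools import combinations


def average_ranks(values):
    """Rank values 1..n, giving tied values the mean of the positions they occupy."""
    first, last = {}, {}
    for pos, v in enumerate(sorted(values), start=1):
        first.setdefault(v, pos)
        last[v] = pos
    return [(first[v] + last[v]) / 2 for v in values]


def wilcoxon_signed_rank(x, y):
    """Two-sided paired Wilcoxon signed-rank test (normal approximation).

    Zero differences are dropped, tied |differences| share average ranks and
    the variance gets the usual tie correction. Returns (z, p, r), where
    r = |z| / sqrt(number of pairs), one common effect-size convention.
    """
    diffs = [a - b for a, b in zip(x, y) if a != b]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    abs_diffs = [abs(d) for d in diffs]
    ranks = average_ranks(abs_diffs)
    w_plus = sum(rank for rank, d in zip(ranks, diffs) if d > 0)
    mean = n * (n + 1) / 4
    tie_term = sum(t ** 3 - t for t in Counter(abs_diffs).values())
    var = n * (n + 1) * (2 * n + 1) / 24 - tie_term / 48
    z = (w_plus - mean) / math.sqrt(var)
    p = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal p-value
    r = abs(z) / math.sqrt(len(x))
    return z, p, r


def pairwise_posthoc(scores):
    """Compare every pair of sources, with Bonferroni-adjusted p-values.

    `scores` maps a source label to per-participant values listed in the
    same participant order (hypothetical structure).
    """
    pairs = list(combinations(scores, 2))
    results = {}
    for a, b in pairs:
        z, p, r = wilcoxon_signed_rank(scores[a], scores[b])
        results[(a, b)] = {"z": z, "p_adj": min(1.0, p * len(pairs)), "r": r}
    return results


if __name__ == "__main__":
    # Hypothetical per-participant ratings; not study data.
    example = {
        "algorithm": [4, 5, 3, 4, 4, 5],
        "editorial": [2, 3, 3, 2, 3, 2],
        "peer": [4, 4, 4, 5, 3, 4],
    }
    for pair, res in pairwise_posthoc(example).items():
        print(pair, {key: round(val, 3) for key, val in res.items()})
```

The same pairwise function applies unchanged to other paired measures, such as time spent listening to each playlist.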

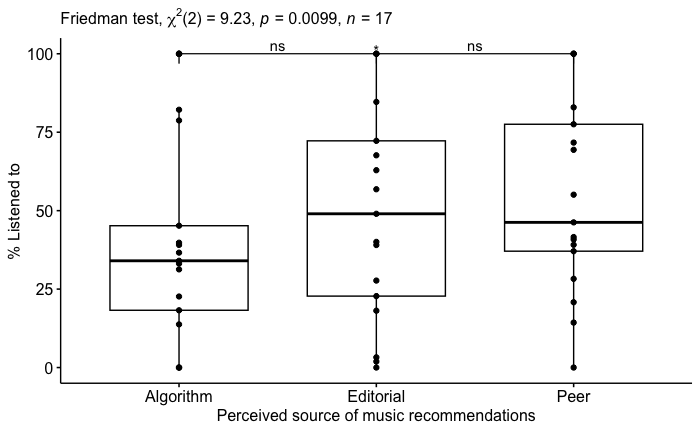

We then compared the time participants spent listening to each playlist. Results indicate that there is an overall significant difference in the time spent listening to each playlist, but the effect size is small. Post-hoc pairwise comparisons indicate that there is a significant difference, with a large effect size, between the time spent listening to the ‘algorithm’ playlist and the time spent listening to the ‘peer’ playlist.

There was no significant difference between the time participants spent listening to the ‘algorithm’ and ‘editorial’ playlists, nor between the time spent listening to the ‘editorial’ and ‘peer’ playlists. Participants therefore spent significantly more time listening to the ‘peer’ playlist than to the ‘algorithm’ playlist, suggesting that people are more open to peer-based recommendations than to algorithmic recommendations. This contrasts with our analysis of the sign-up survey data, in which ratings for algorithmic and peer recommendations did not differ significantly.

Our results highlight a difference between participants’ reported openness and their actual openness to different sources of music recommendations. Participants reported a preference for both algorithmic and peer recommendations over professionally curated (‘editorial’) recommendations. However, they spent more time listening to ‘peer’ playlists than to ‘algorithm’ playlists. There were no significant differences between playlists in participants’ willingness to listen again to the tracks included, their willingness to listen to other tracks by the same artists, or their willingness to spend money or make purchases based on each playlist.

This preference for peer recommendations indicates that ‘word-of-mouth’ marketing, such as social media and promotions that encourage ‘sharing’, might be an effective means for emerging artists to encourage people to listen to their music. However, while this might increase exposure and indirect income from platform revenue shares, the recommendation source did not appear to influence listeners’ opinion of the music itself. Peer recommendations may therefore support stronger direct music, ticket or merchandise sales only by reaching more individuals who are willing to listen; none of the recommendation sources appears to make it more or less likely that an exposed listener will go on to engage further.

Future work investigating which listeners choose to share and promote content amongst their peers, and the most effective means to encourage this, may offer insights into how emerging artists can increase their exposure and audiences, and how they can encourage further listener engagement.

This study was funded by a Creative Informatics Small Research Grant.

Image by vectorjuice on Freepik
